Explaining Self-Supervised Image Representations with Visual Probing

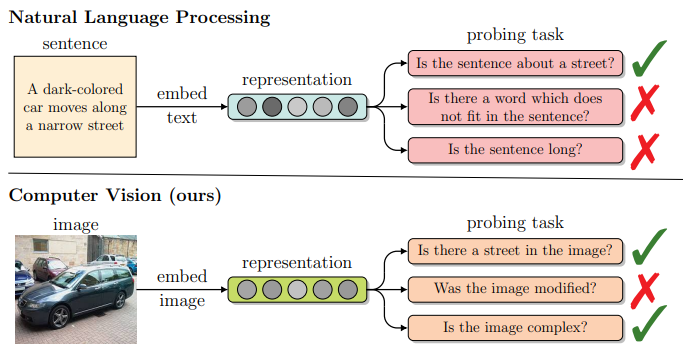

Probing tasks, widely used in natural language processing, validate if a representation implicitly encodes a given property. We introduce a visual taxonomy along with the corresponding probing framework that allow to build analogous visual probing tasks and explain the self-supervised image representations

Probing tasks, widely used in natural language processing, validate if a representation implicitly encodes a given property. We introduce a visual taxonomy along with the corresponding probing framework that allow to build analogous visual probing tasks and explain the self-supervised image representations

Abstract

Recently introduced self-supervised methods for image representation learning provide on par or superior results to their fully supervised competitors, yet the corresponding efforts to explain the self-supervised approaches lag behind. Motivated by this observation, we introduce a novel visual probing framework for explaining the self-supervised models by leveraging probing tasks employed previously in natural language processing. The probing tasks require knowledge about semantic relationships between image parts. Hence, we propose a systematic approach to obtain analogs of natural language in vision, such as visual words, context, and taxonomy. We show the effectiveness and applicability of those analogs in the context of explaining self-supervised representations. Our key findings emphasize that relations between language and vision can serve as an effective yet intuitive tool for discovering how machine learning models work, independently of data modality. Our work opens a plethora of research pathways towards more explainable and transparent AI.